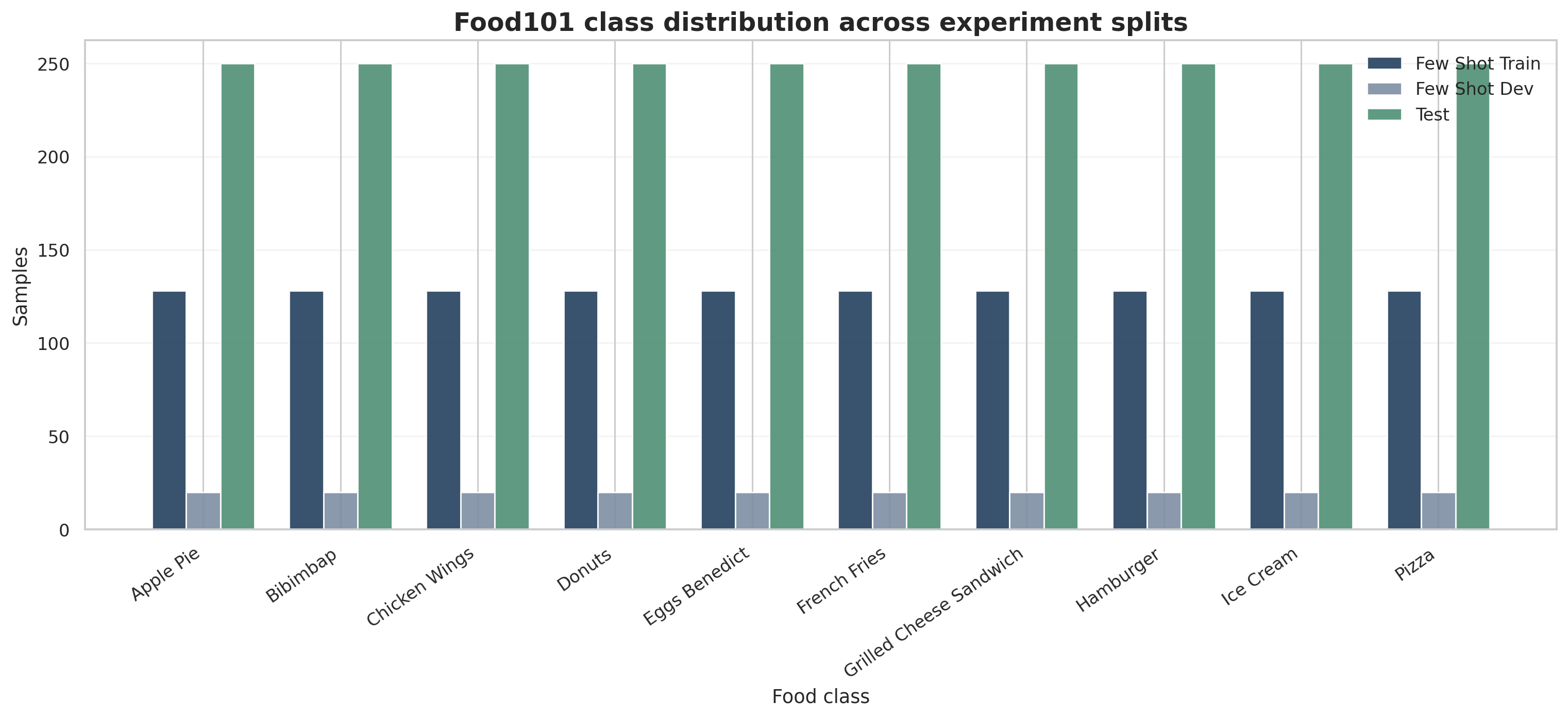

Ten classes, each with exactly 1,000 images before few-shot slicing.

Assignment 1 / Multimodal / Dataset EDA

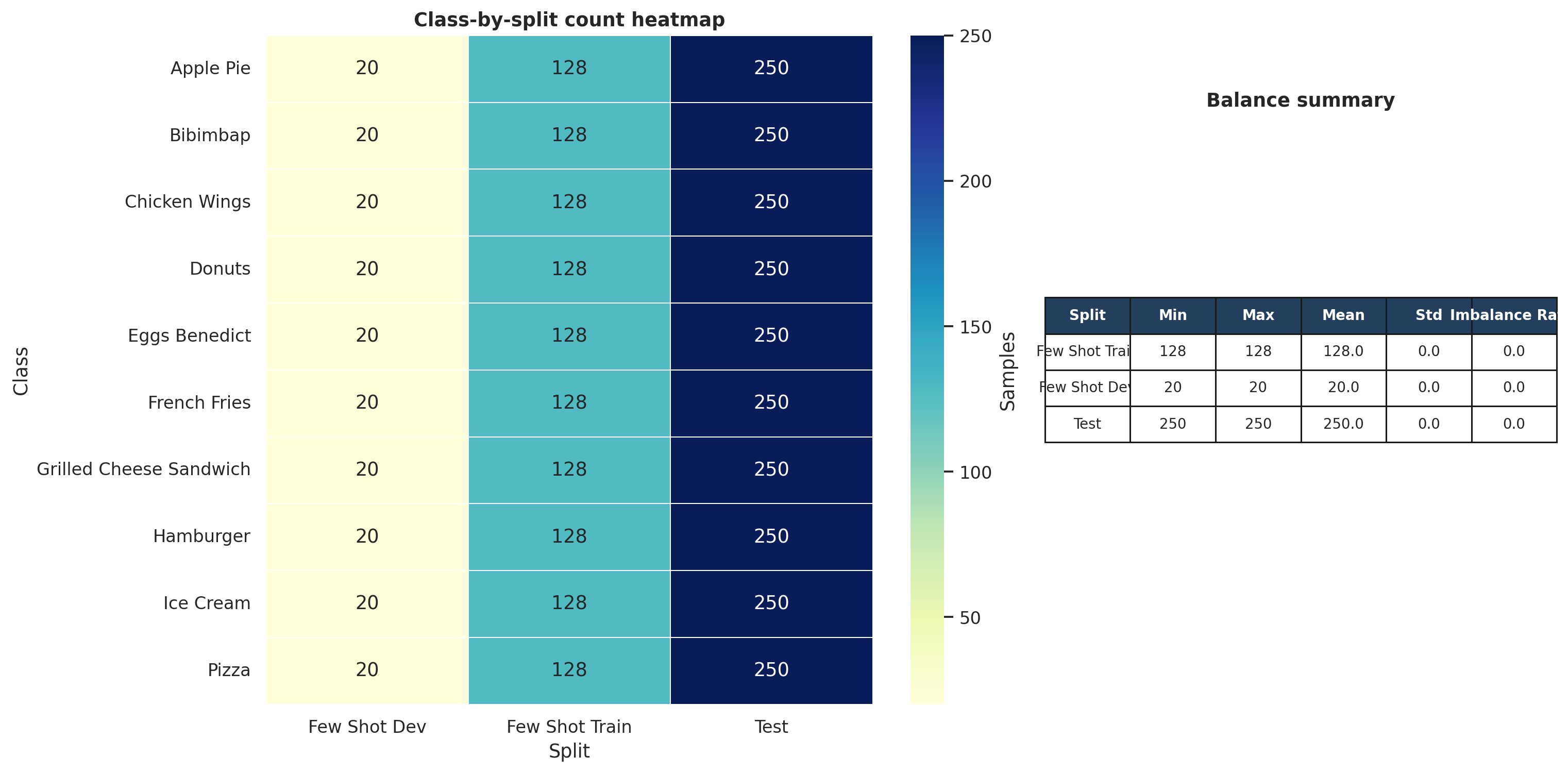

10-Class Filter, Balanced Few-Shot Splits

Food101 becomes a controlled ten-class benchmark.

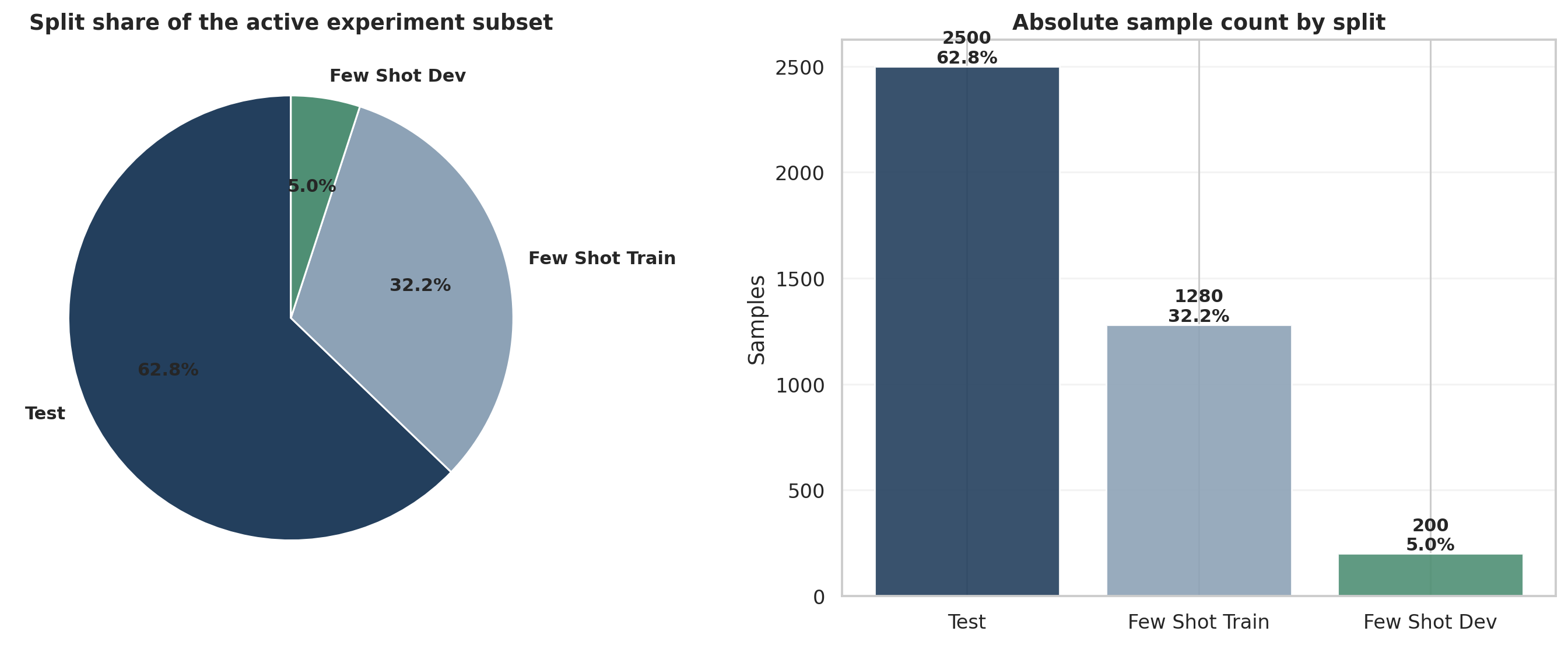

The multimodal experiment narrows Food101 to ten visually distinct yet semantically overlapping food categories, then constructs balanced few-shot train and validation subsets from the official training split while keeping the full official validation split as the test set. Holding the test set fixed and the classes balanced stabilizes evaluation, so differences between methods can be attributed to representation quality rather than data skew.

Dataset framing

The filtered subset contains 7,500 training images and 2,500 test images, that is, exactly 1,000 images per selected class (750 train, 250 test). Each few-shot configuration draws balanced support sets from the filtered training pool, while the test split stays fixed at 250 images per class.

7,500 images: official Food101 train split after class filtering.

2,500 images: official validation split, preserved in full for stable reporting.

The working subset is perfectly balanced by design across all active classes.
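The counts above can be sanity-checked with a few lines of Python. This is a minimal sketch rather than the assignment's actual code: `filter_and_count` and `is_balanced` are hypothetical helpers, and the underscore spellings in `SELECTED` are an assumption about how the class names appear in the label list.

```python
from collections import Counter

# Assumed label spellings for the ten selected classes (underscore form).
SELECTED = [
    "apple_pie", "bibimbap", "chicken_wings", "donuts", "eggs_benedict",
    "french_fries", "grilled_cheese_sandwich", "hamburger", "ice_cream", "pizza",
]


def filter_and_count(label_names, selected=SELECTED):
    """Return the indices of examples in the selected classes and per-class counts."""
    keep = set(selected)
    kept_indices = [i for i, name in enumerate(label_names) if name in keep]
    counts = Counter(label_names[i] for i in kept_indices)
    return kept_indices, counts


def is_balanced(counts, expected_classes, per_class):
    """True if exactly the expected classes are present, each with per_class items."""
    return set(counts) == set(expected_classes) and all(
        counts[c] == per_class for c in expected_classes
    )
```

Applied to the label names of the official train split, `is_balanced(counts, SELECTED, 750)` should hold; applied to the official validation split, the expected per-class count is 250.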

How the few-shot subset is built

| Component | Definition |
|---|---|
| Original source | `ethz/food101` with 101 classes and official train / validation splits. |
| Selected classes | apple pie, bibimbap, chicken wings, donuts, eggs benedict, french fries, grilled cheese sandwich, hamburger, ice cream, pizza. |
| Filtered train pool | 7,500 images total, 750 images per class. |
| Filtered test pool | 2,500 images total, 250 images per class. |
| Validation budget | 20 images per class for every few-shot setting. |
| Shot settings | 8, 16, 32, 64, 128 images per class for few-shot training. |
| Random seed | 42 for deterministic support and validation sampling. |
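The recipe in the table can be sketched as a per-class sampler. This is an illustration under stated assumptions, not the assignment's implementation: `build_few_shot_split` is a hypothetical helper, and the choice to shuffle each class pool once so that the validation set is shared and smaller shot settings are nested inside larger ones is an assumption the source does not confirm.

```python
import random
from collections import defaultdict


def build_few_shot_split(labels, shots, val_per_class=20, seed=42):
    """Sample a balanced few-shot train set and validation set from a label list.

    labels: class label of each example in the filtered train pool.
    Returns (train_indices, val_indices), which are disjoint.
    """
    by_class = defaultdict(list)
    for idx, label in enumerate(labels):
        by_class[label].append(idx)

    rng = random.Random(seed)  # fixed seed gives deterministic sampling
    train, val = [], []
    for label in sorted(by_class):  # fixed class order keeps the RNG stream stable
        pool = list(by_class[label])
        if len(pool) < shots + val_per_class:
            raise ValueError(f"class {label!r} has only {len(pool)} examples")
        rng.shuffle(pool)
        # Assumption: validation takes the first slice, training the next one,
        # so validation is identical across shot settings.
        val.extend(pool[:val_per_class])
        train.extend(pool[val_per_class:val_per_class + shots])
    return train, val
```

With ten classes, the 8-shot setting yields 80 training and 200 validation indices, and the 128-shot setting yields 1,280 training indices, well within the 750 images available per class.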

Every active split is deliberately balanced

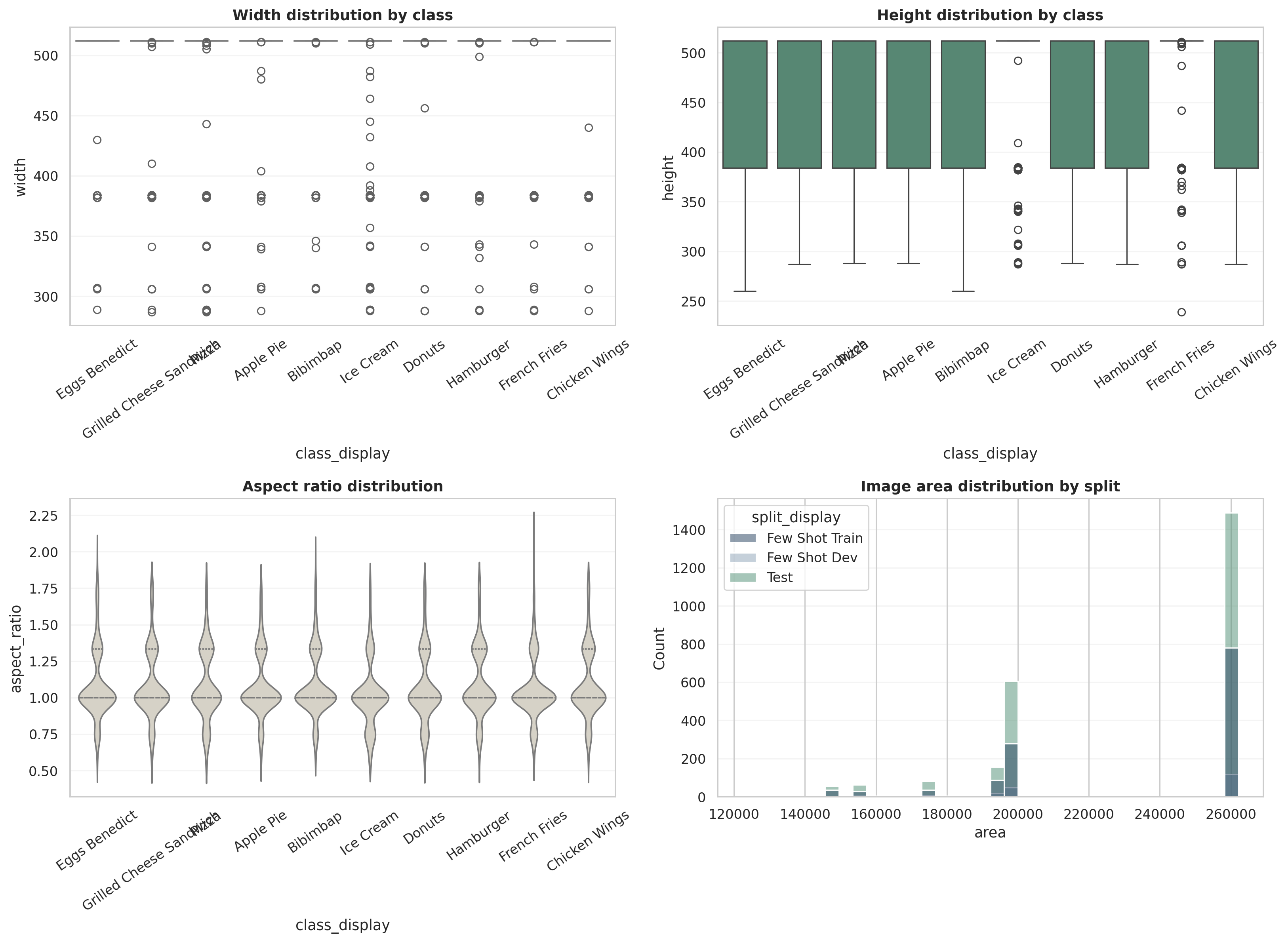

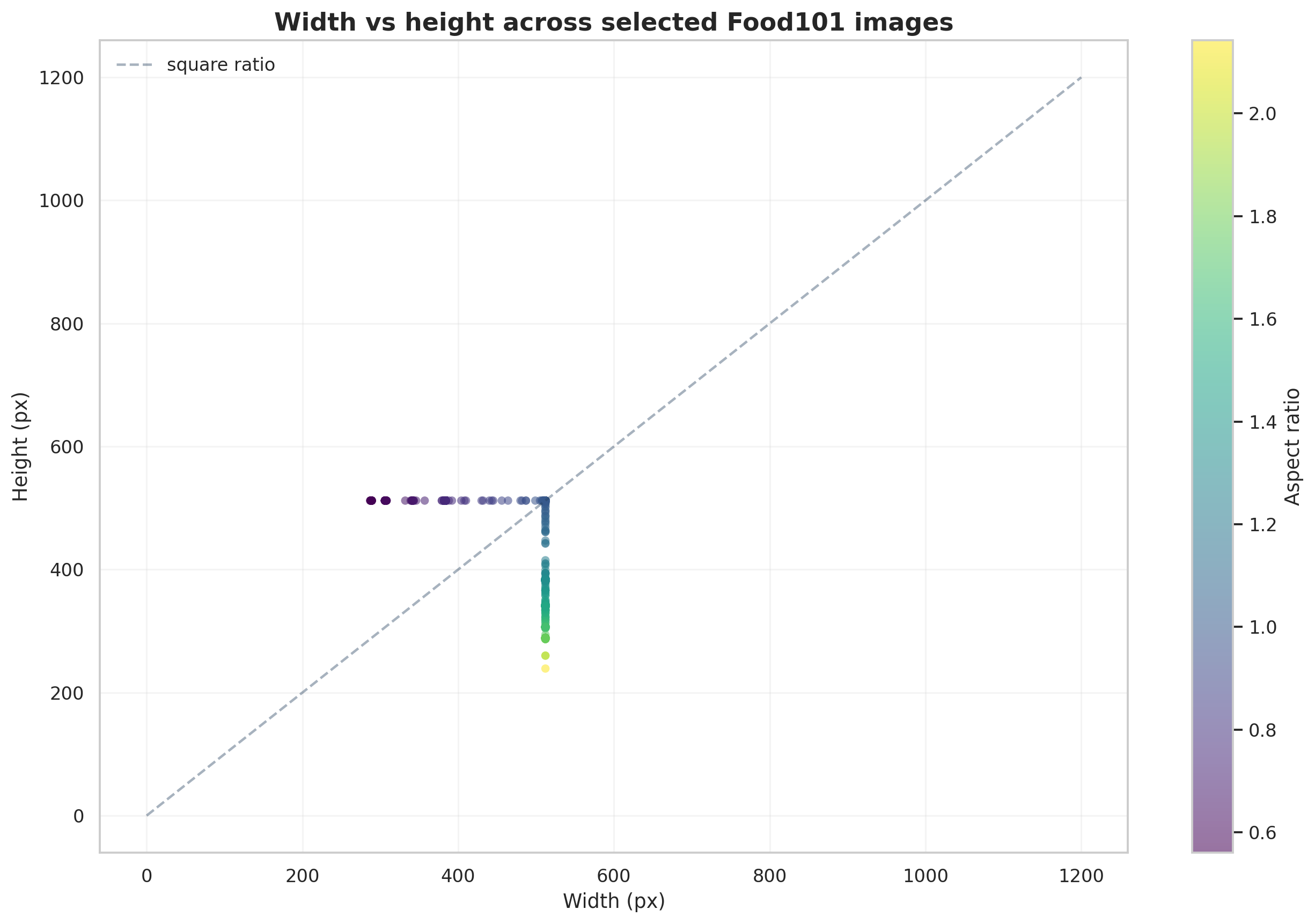

Food101 is close to square, but not uniform

Most Food101 images are close to a 512-pixel square frame, but aspect ratios still vary widely enough to matter for crop policy. Elongated plates, tall plated burgers, and tightly framed desserts each stress a naive resize-plus-center-crop pipeline differently.
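One way to quantify the crop-policy concern is to compute how much of each image survives a square center crop. The sketch below assumes the common pipeline of resizing the shorter side to the crop size and then cropping a centered square; the function names are hypothetical and the example sizes are illustrative, not measured from the dataset.

```python
def aspect_ratio(width, height):
    """Width divided by height; 1.0 means a square image."""
    return width / height


def center_crop_retained_fraction(width, height):
    """Fraction of image area kept by a shorter-side resize plus square center crop.

    After the shorter side is resized to the crop size, the crop keeps the full
    shorter dimension and only short/long of the longer one.
    """
    short, long = min(width, height), max(width, height)
    return short / long


# Illustrative sizes only: a square frame, a landscape frame, a portrait frame.
for w, h in [(512, 512), (512, 384), (384, 512)]:
    print(w, h, aspect_ratio(w, h), center_crop_retained_fraction(w, h))
```

A 512x384 image keeps 75% of its area under this policy, so content near the left and right edges of wide shots is the first thing to be cut.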

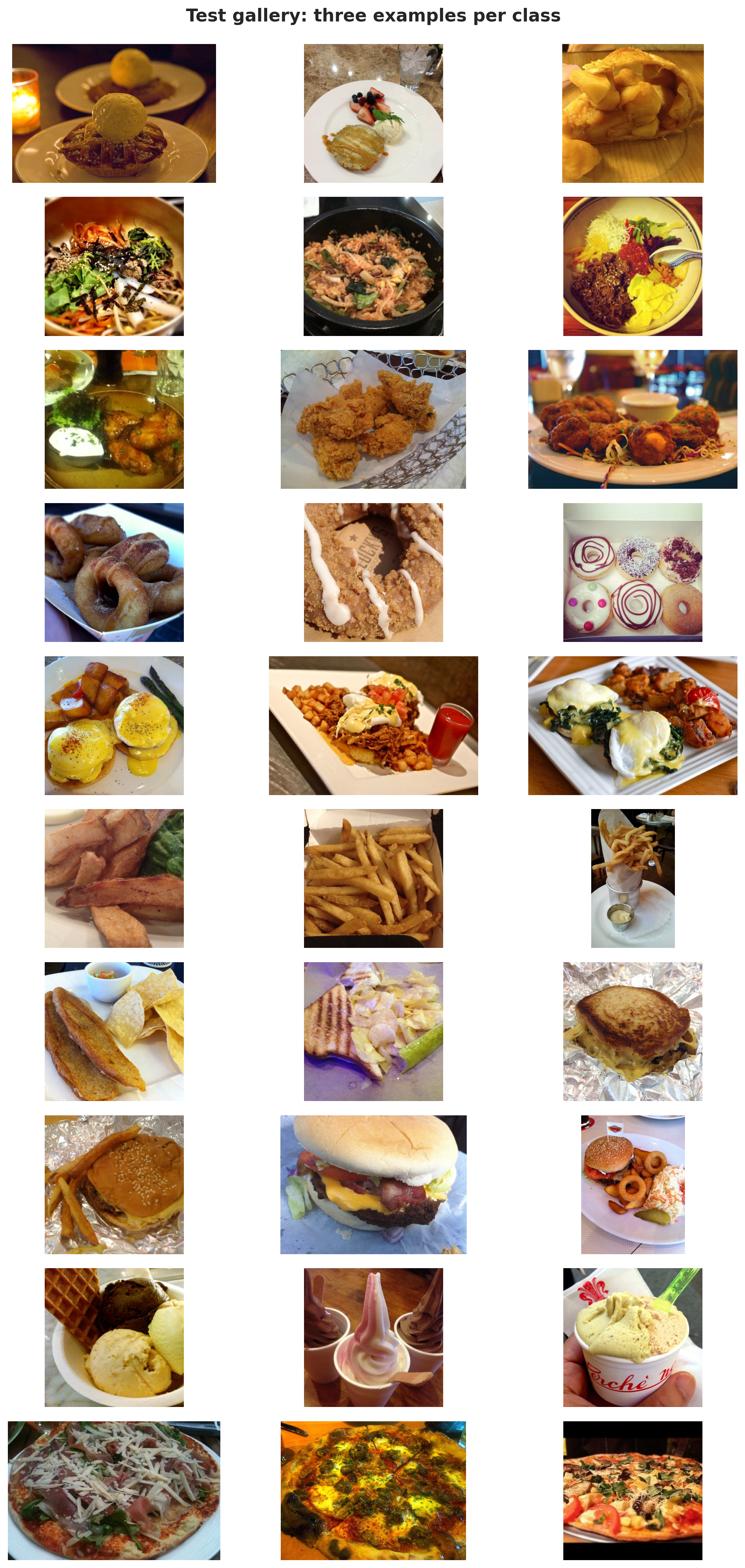

Visual variation remains high inside each class