Apple pie, bibimbap, chicken wings, donuts, eggs benedict, french fries, grilled cheese, hamburger, ice cream, pizza.

Assignment 1 / Multimodal Track

Food101 x CLIP

Latest Run 2026-04-06

One encoder, three decision routes, ten food classes.

This report documents the full multimodal pipeline for `ethz/food101`: preprocessing the 10-class subset, extracting CLIP embeddings, evaluating zero-shot prompting, adapting with CoOp learnable context, and benchmarking against a few-shot linear probe. The report is laid out as a single continuous page so that every plot, table, and failure case shares one consistent visual style.

Current system state

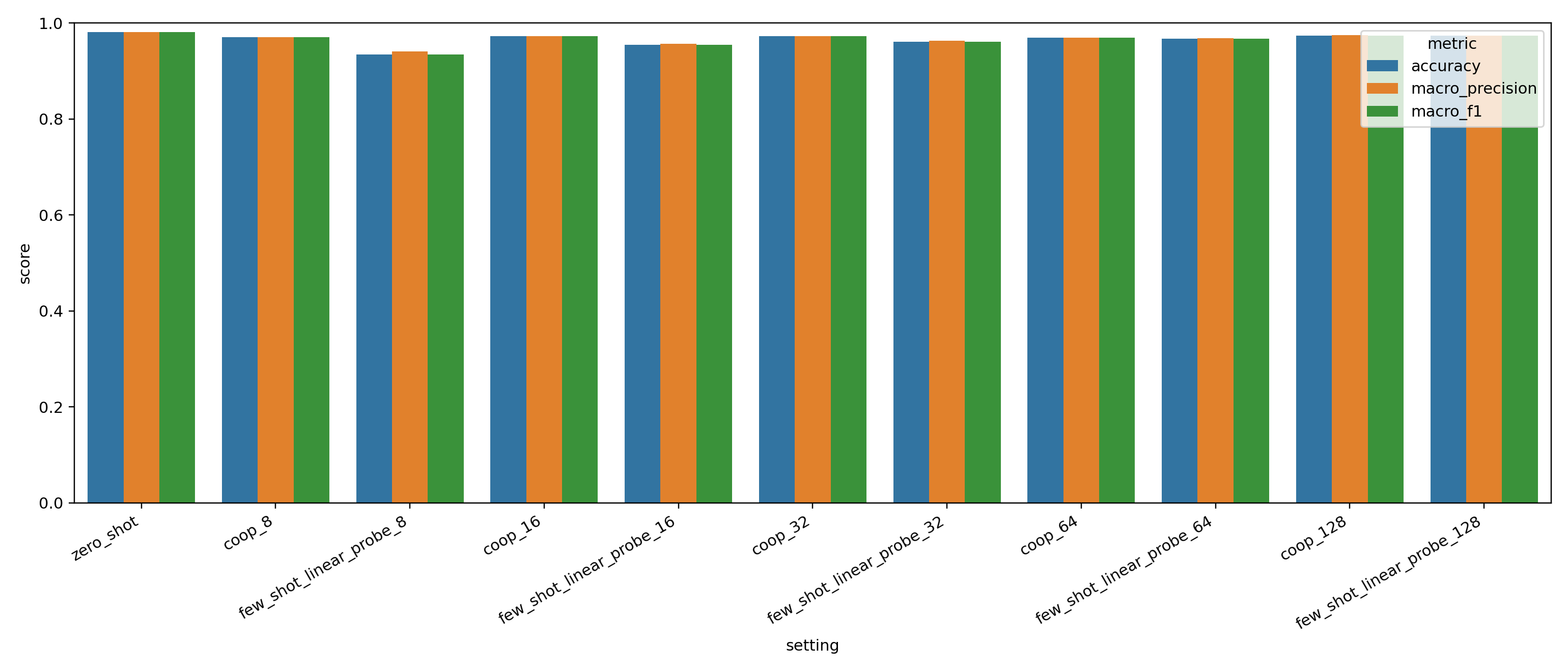

The active experiment filters Food101 to 10 balanced classes, preserves the official validation split as the held-out test set, and evaluates three classification strategies over shared CLIP image embeddings. Zero-shot is still the strongest overall route in this run, but CoOp narrows the gap and outperforms the linear probe at low-shot settings.
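The subset step described above can be pictured as a label filter followed by a remap to contiguous class ids. The sketch below is only an illustration on toy records: it assumes each example is a `(path, label_name)` pair, and `filter_subset`, `is_balanced`, and the label strings (taken from the class list in this report, which may differ from the dataset's own identifiers) are hypothetical rather than the project's actual code.

```python
from collections import Counter

# Ten class names as listed in this report (label strings are assumed).
SELECTED = [
    "apple pie", "bibimbap", "chicken wings", "donuts", "eggs benedict",
    "french fries", "grilled cheese", "hamburger", "ice cream", "pizza",
]
CLASS_TO_ID = {name: i for i, name in enumerate(SELECTED)}


def filter_subset(records):
    """Keep only the selected classes and remap labels to ids 0..9."""
    return [
        (path, CLASS_TO_ID[name])
        for path, name in records
        if name in CLASS_TO_ID
    ]


def is_balanced(subset):
    """True when every class present has the same number of examples."""
    counts = Counter(label for _, label in subset)
    return len(set(counts.values())) <= 1


# Toy records standing in for one split; "sushi" is outside the subset.
toy_split = [
    ("a.jpg", "pizza"), ("b.jpg", "sushi"),
    ("c.jpg", "donuts"), ("d.jpg", "pizza"), ("e.jpg", "donuts"),
]
subset = filter_subset(toy_split)
```

Applying the same filter separately to the official train and validation splits would keep the held-out test set untouched by any training-side sampling.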

Filtered from the official Food101 train and validation splits.

Zero-shot CLIP remains the strongest final method in the latest run.

CoOp edges the linear probe at 128 shots, the highest support budget.

What changed in the latest report

- Zero-shot now represents each class with the average of five prompt embeddings before computing image-text similarity.

- Few-shot comparison now includes both a linear probe and CoOp prompt tuning with learnable context tokens.

- Evaluation exports confusion matrices, top-failure tables, CoOp token decoding, and saliency maps into the docs asset bundle.

- All current plots, metrics, CSV tables, and failure visualizations have been copied into `docs/assignment-1/multimodal/assets/results` for publication.
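The prompt-ensemble change in the first item can be sketched in plain Python as follows. This is a minimal illustration, not the project's implementation: it assumes each prompt embedding is L2-normalized before averaging and that the averaged class vector is renormalized before cosine similarity, which the report does not state. The function names and the 3-dimensional toy vectors are hypothetical stand-ins for real CLIP text and image embeddings.

```python
import math


def l2_normalize(vec):
    """Scale a vector to unit length."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def class_prototype(prompt_embeddings):
    """Average the normalized prompt embeddings, then renormalize (assumed recipe)."""
    normed = [l2_normalize(e) for e in prompt_embeddings]
    dim = len(normed[0])
    mean = [sum(e[i] for e in normed) / len(normed) for i in range(dim)]
    return l2_normalize(mean)


def zero_shot_predict(image_embedding, prototypes):
    """Return (best class index, cosine similarities to every class prototype)."""
    img = l2_normalize(image_embedding)
    sims = [sum(a * b for a, b in zip(img, p)) for p in prototypes]
    best = max(range(len(sims)), key=sims.__getitem__)
    return best, sims


# Toy data: two classes, five prompt embeddings each.
prompts_class0 = [[1.0, 0.1, 0.0], [0.9, 0.0, 0.1], [1.0, 0.0, 0.0],
                  [0.8, 0.1, 0.1], [0.95, 0.05, 0.0]]
prompts_class1 = [[0.1, 1.0, 0.0], [0.0, 0.9, 0.1], [0.0, 1.0, 0.0],
                  [0.1, 0.8, 0.1], [0.05, 0.95, 0.0]]
prototypes = [class_prototype(prompts_class0), class_prototype(prompts_class1)]

pred, sims = zero_shot_predict([0.9, 0.2, 0.1], prototypes)
```

Averaging over several phrasings tends to make the class vector less sensitive to the wording of any single prompt, which is the usual motivation for this kind of ensemble.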

Navigate the full-width report

Dataset EDA

Split design, geometry variation, class balance, and representative samples from the 10 selected classes.

Model Backbone

Shared CLIP encoder, prompt ensemble for zero-shot, prompt learner for CoOp, and the linear-probe alternative.

Methodology

Config surface, run order, split recipe, optimizer settings, and why the frozen-encoder design is useful here.

Evaluation Results

Metrics table, confusion matrices, CoOp token decoding, and high-confidence failures with gradient saliency maps.

Published files

The docs now ship with the latest run bundle: the evaluation summary, metrics CSVs, confusion matrices for all three methods (zero-shot, CoOp, and linear probe), decoded CoOp context vectors, and saliency maps for the top failed predictions.
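For readers unfamiliar with two of these artifacts, the sketch below shows one plausible way a confusion matrix and a top-failure ranking could be derived from predictions. It assumes integer labels and one confidence score per prediction; `confusion_matrix`, `top_failures`, and the toy values are hypothetical and are not taken from the published run.

```python
def confusion_matrix(y_true, y_pred, num_classes):
    """Rows are true classes, columns are predicted classes."""
    matrix = [[0] * num_classes for _ in range(num_classes)]
    for t, p in zip(y_true, y_pred):
        matrix[t][p] += 1
    return matrix


def top_failures(y_true, y_pred, confidences, k):
    """Indices of misclassified samples, most confident mistakes first."""
    wrong = [i for i, (t, p) in enumerate(zip(y_true, y_pred)) if t != p]
    return sorted(wrong, key=lambda i: confidences[i], reverse=True)[:k]


# Toy predictions for three classes (illustrative values only).
y_true = [0, 0, 1, 1, 2, 2]
y_pred = [0, 1, 1, 1, 0, 2]
conf = [0.9, 0.6, 0.8, 0.7, 0.95, 0.5]

cm = confusion_matrix(y_true, y_pred, num_classes=3)
fails = top_failures(y_true, y_pred, conf, k=2)
```

Ranking mistakes by confidence surfaces the cases where the model was most certain and still wrong, which are typically the most informative ones to inspect with saliency maps.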

Interactive experiment report

The full training log, method comparison, and tracked artifacts are also published on WandB as an interactive version of the report.